Not too long ago NetApp, Microsoft, and Interxion came together to sponsor a hybrid cloud lab with NetApp Private Storage for Azure near my office in Amsterdam. When someone was needed to set this up I jumped at the chance!

For those unaware of the solution, the basic premise is to use the hyperscale cloud providers for elastic compute while maintaining ownership of the data and gaining the rich data management features of NetApp. So use cases like adopting a ‘cloud first’ strategy, DR in the cloud, dev/test in the cloud, cloud bursting, cloud hopping, and more can be experienced by customers and prospects first-hand in this lab. Contact me if you are interested in participating!

Anyway, this post isn’t about the ‘why’ but rather the ‘how’, and more specifically, the ‘how’ of getting the most out of the Azure ExpressRoute service.

After the hardware was shipped we had a short onboarding at Interxion (awesome service by the way; thanks Hans!), installed a switchless clustered Data ONTAP system, a couple of Cisco servers, and a Cisco Nexus switch in our cabinet. Next, Interxion pulled a pair of fibers through and hooked us up to their Cloud Connect platform, which is our gateway to Azure (and more providers in the future). With the physical tasks done there is no need to visit the data center again.

Next we set up networking within Azure and provisioned an ExpressRoute (ER) to our cabinet. The steps are in NetApp Tech Report TR-4316. The guide has a few small mistakes and some outdated info (cloud moves fast!) but nothing that couldn’t be solved by actually reading error messages and a bit of Google. After setting up an SVM with IP addresses on the Azure network it didn’t take long to access iSCSI, SMB, and NFS storage from Azure VMs.

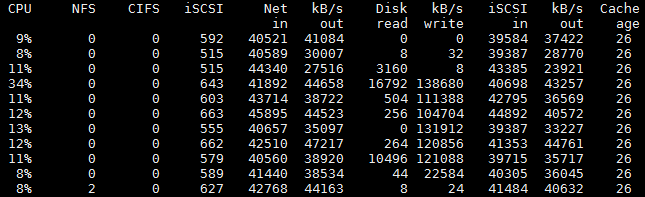

Then the fun started. To start we had a 200Mbit ER and we could max that out pretty easily. We boosted the speed to 1000Mbit (you can upgrade an existing circuit in place, but to downgrade you have to destroy it and create a new one, so beware!) and were expecting to be able to saturate the link. Expecting…but we just couldn’t do it.

How to optimize for Azure ExpressRoute throughput

Choose a VM with lots of resources. If you haven’t worked with a hyperscaler before it might come as a surprise that even though you have a 10Gbit vNIC it doesn’t mean you have 10Gbit worth of network access. Far from it actually. I was unable to find any official listing or SLA for bandwidth provided, but this old table seems to be reasonably correct for A1-A3 as confirmed by some basic testing. I actually saw a bit more with the A4 VM (8 cores, 14GB RAM), which came in at about 2Gbit. So the moral of the story: if you need bandwidth, go for a VM with lots of resources like an A4 or its equivalent in the DS line.

Remember the Bandwidth Delay Product. Although ExpressRoute is low latency (<2ms in our case) it is not LAN latency (50-200us is typical). For this reason we need to ensure the TCP window size is large enough not to limit us on a per-session basis. For iSCSI, Clustered Data ONTAP has a default window size of 131400 bytes. If you calculate the per-session ceiling (window size divided by round-trip time) with this latency and window size, it maxes out at about 64MB/s. To go higher we can raise the window to as much as 262800 bytes at the SVM level using:

cdot::> set advanced
cdot::*> iscsi modify -vserver -tcp-window-size 262800

The value only takes effect for new connections, so either stop/start the iSCSI service, down/up each iSCSI LIF, or reboot your hosts. Check that your connections have the correct TCP window size using:

cdot::> iscsi connection show
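To make the window-size arithmetic concrete, here is a minimal Python sketch of the bandwidth-delay calculation. It assumes a round-trip time of exactly 2ms (the real figure was a bit under that) and decimal megabytes; the function names are made up for illustration and are not part of any NetApp tooling.

```python
def max_throughput_mb_s(window_bytes, rtt_seconds):
    """Upper bound for one TCP session: at most one window in flight per round trip."""
    return window_bytes / rtt_seconds / 1_000_000


def window_needed_bytes(link_mbit_s, rtt_seconds):
    """Bandwidth-delay product: bytes that must be in flight to keep the link full."""
    return link_mbit_s * 1_000_000 / 8 * rtt_seconds


RTT = 0.002  # assumed 2 ms round trip between Azure and the cabinet

for window in (131400, 262800):
    rate = max_throughput_mb_s(window, RTT)
    print(f"{window} byte window: {rate:.1f} MB/s per session")

needed = window_needed_bytes(1000, RTT)
print(f"1000Mbit link needs about {needed:.0f} bytes in flight")
```

Under these assumptions the default window caps a single session in the mid-60s of MB/s, in line with the rough figure quoted above, while the 262800-byte maximum sits just above the roughly 250000 bytes a 1000Mbit link needs at 2ms.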

Remember TCP congestion avoidance. If packet loss occurs (e.g. some intermediate device decides to police our traffic) then TCP congestion avoidance kicks in and starts sending data at a lower rate. Over time the rate increases until packet loss occurs again, and the data rate is once more reduced to avoid congestion. To cope with packet loss you want at least a handful of TCP sessions active, because each one implements its own TCP congestion avoidance, allowing you to achieve higher total throughput and get as close as possible to the promised bandwidth. When using iSCSI simply make additional iSCSI sessions from the client. You can use the same source/dest IP if you like, although if you want to get both links in the ER active you want at least 2 unique IP address pairs. In my testing I needed 4 to 12 sessions, with MPIO set to the Least Queue Depth policy, to approach the promised bandwidth from a single host.
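For a rough feel of why several sessions are needed, the sketch below uses the textbook Mathis et al. approximation for steady-state TCP throughput under random loss: rate ≈ (MSS / RTT) × (√1.5 / √p). This is a swapped-in model, not something measured in the lab. The 0.1% loss rate, the 1460-byte MSS, and the 2ms RTT are all assumptions, and the function names are purely illustrative.

```python
import math


def per_session_mb_s(mss_bytes, rtt_seconds, loss_rate, window_bytes):
    """Estimate one session's rate as the lower of two ceilings (MB/s)."""
    # Loss-limited ceiling from the Mathis approximation (assumed model).
    loss_limited = (mss_bytes / rtt_seconds) * math.sqrt(1.5) / math.sqrt(loss_rate)
    # Window-limited ceiling: one TCP window per round trip.
    window_limited = window_bytes / rtt_seconds
    return min(loss_limited, window_limited) / 1_000_000


def sessions_needed(link_mbit_s, session_mb_s):
    """Number of parallel sessions required to cover the link rate."""
    link_mb_s = link_mbit_s / 8
    return math.ceil(link_mb_s / session_mb_s)


# Hypothetical inputs: 1460-byte MSS, 2 ms RTT, 0.1% loss, 262800-byte window.
rate = per_session_mb_s(1460, 0.002, 0.001, 262800)
print(f"per session: {rate:.1f} MB/s")
print(f"sessions for a 1000Mbit ER: {sessions_needed(1000, rate)}")
```

With these made-up inputs a single session lands well short of 1000Mbit and about five are needed, which is at least in the same range as the 4 to 12 sessions reported above. Real loss patterns from a policer will differ from this model.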

Configure BGP to balance traffic over both links. ExpressRoute includes two links for redundancy and traffic from Azure will be balanced over both links. For traffic going to Azure this is determined by the BGP configuration made on your own switch, which by default uses a single path. To enable multiple paths on a Cisco Nexus switch add the ‘maximum-paths’ setting like this:

router bgp 64512
  vrf vrf-100
    address-family ipv4 unicast
      network 192.168.100.0/24
      maximum-paths 2

Choose an appropriate vNET ExpressRoute Gateway. SKUs are available with throughput ranging from 500 to 2000Mbps. You are allowed to have 4 vNET Gateways per vNET, but each must be connected to an ExpressRoute in a different location. So in practice it means for a given vNET there is a 1:1 relationship between vNET gateways and NPS locations. It also means that for a given vNET your maximum throughput is limited to 2000Mbps. I got this info from a colleague and have to say that in my testing I didn’t specify a SKU and saw around 2000Mbps, which is more than expected from the table. So something to be aware of if testing.
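Taken together, the tips so far say that the end-to-end ceiling is whichever stage in the path is slowest. The sketch below is only a back-of-the-envelope model: the stage names are illustrative, the figures are the rough ones quoted in this post rather than official limits, and the gateway values are simply the two ends of the SKU range mentioned above.

```python
def bottleneck(stages_mbit_s):
    """Return (name, Mbit/s) of the slowest stage in the path."""
    name = min(stages_mbit_s, key=stages_mbit_s.get)
    return name, stages_mbit_s[name]


# Scenario A: big VM and big gateway, but BGP still using a single path.
single_path = {
    "A4 VM network (approx. observed)": 2000,
    "vNET gateway (largest SKU quoted)": 2000,
    "1000Mbit ER, one link in use": 1000,
}

# Scenario B: same VM and circuit, but the smallest gateway SKU quoted.
small_gateway = dict(single_path)
small_gateway["vNET gateway (smallest SKU quoted)"] = 500
del small_gateway["vNET gateway (largest SKU quoted)"]

for label, path in (("single BGP path", single_path), ("small gateway", small_gateway)):
    name, rate = bottleneck(path)
    print(f"{label}: limited to {rate}Mbit by {name}")
```

The point of modelling it this way is that fixing one stage (say, enabling ‘maximum-paths’) only helps until the next-slowest stage takes over.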

What throughput to expect

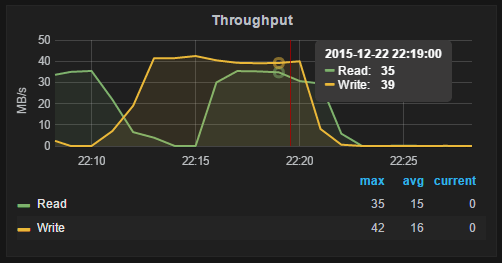

With the above setup and a 1Gbit ER I was able to achieve about 90MB/s reads + 90MB/s writes from a single host, and 200MB/s when writing from two hosts. Unfortunately at the time I had the 1Gbit ER up I didn’t know of the BGP maximum-paths setting yet, and I downgraded my link to save $$, so I wasn’t able to verify max read throughput with both links active. With my current 200Mbit ER though I can achieve 40MB/s reads (2 x 200Mbit), so I assume that with a 1Gbit ER 200MB/s reads would be possible.
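The extrapolation in that last sentence can be written out. This sketch assumes decimal units (8 Mbit = 1 MB), ignores protocol overhead, and assumes the efficiency observed on the 200Mbit circuit carries over unchanged to a 1Gbit one, which was not verified.

```python
def two_link_ceiling_mb_s(circuit_mbit_s):
    """Raw ceiling in MB/s when both ER links carry the full circuit rate."""
    return 2 * circuit_mbit_s / 8


measured_mb_s = 40  # reads observed on the 200Mbit ER with both links active

# Fraction of the raw two-link ceiling actually achieved on the small circuit.
efficiency = measured_mb_s / two_link_ceiling_mb_s(200)

# Apply the same fraction to a 1000Mbit circuit (assumption, not a measurement).
projected_mb_s = efficiency * two_link_ceiling_mb_s(1000)

print(f"efficiency: {efficiency:.0%}")
print(f"projected reads on 1Gbit: {projected_mb_s:.0f} MB/s")
```

In other words, 40MB/s is about 80% of the 50MB/s raw ceiling for two 200Mbit links, and 80% of the 250MB/s ceiling for two 1Gbit links gives the 200MB/s estimate.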

Still, with the smallest 200Mbit ER and two hosts generating workload I can fill both directions pretty well :-)

Comments are always welcome!